An AI OS from a design perspective

AI chat is creating an opportunity for a new UX paradigm. Here I’ll walk through the previous ones to explain why and to put it in historical context.

The first screen-based interfaces combined input and output in the same UI element, with a line at the bottom whose specific character set prompted you for a command. There was a bewildering array of command prompts, reflecting the kind of heterogeneous design environment that is a hallmark of any early period of technology, from pre-smartphone cellphones to commercial aircraft before the jet age:

Early Operating Systems:

A> – CP/M

B> – CP/M (drive B)

C:\> – MS-DOS / PC-DOS

] – Apple DOS / ProDOS

> – TRS-DOS

1> – AmigaDOS

Classic Unix-Like Shells:

$ – sh (Bourne shell), ksh, bash (regular user)

% – csh, tcsh, zsh (default for some users)

hostname% – tcsh (custom)

# – bash, zsh (root user)

> – fish shell

Microsoft Systems:

C:\> – MS-DOS / FreeDOS / CMD.exe

C:\Windows\System32> – Windows CMD.exe (example)

PS C:\> – Windows PowerShell

> – Windows Terminal (varies by shell)

$, %, etc. – WSL (inherits from Linux shell)

Apple Systems:

$ – macOS Terminal with bash (pre-Catalina)

% – macOS Terminal with zsh (Catalina+)

> – fish shell on macOS

Linux / BSD Systems:

$ – bash shell (regular user)

# – bash shell (root user)

% – zsh shell

> – fish shell

[user@host dir]$ – typical bash prompt (customizable)

Mainframes & Others:

$ – VMS / OpenVMS

READY – IBM TSO (z/OS)

> – RSTS/E

@ – TOPS-20 (TOPS-10 used a . prompt)

Scripting / Development Shells:

>>> – Python REPL

irb(main):001:0> – Ruby IRB

> – Node.js REPL

[1]> – Lisp REPL (CLISP)

In [1]: – Jupyter console

Embedded / Networking Devices:

Router> – Cisco IOS (user exec mode)

Router# – Cisco IOS (privileged exec mode)

=> – U-Boot (bootloader)

~ # – BusyBox shell (root in embedded Linux)

This interface paradigm can be summed up in the following schematic.

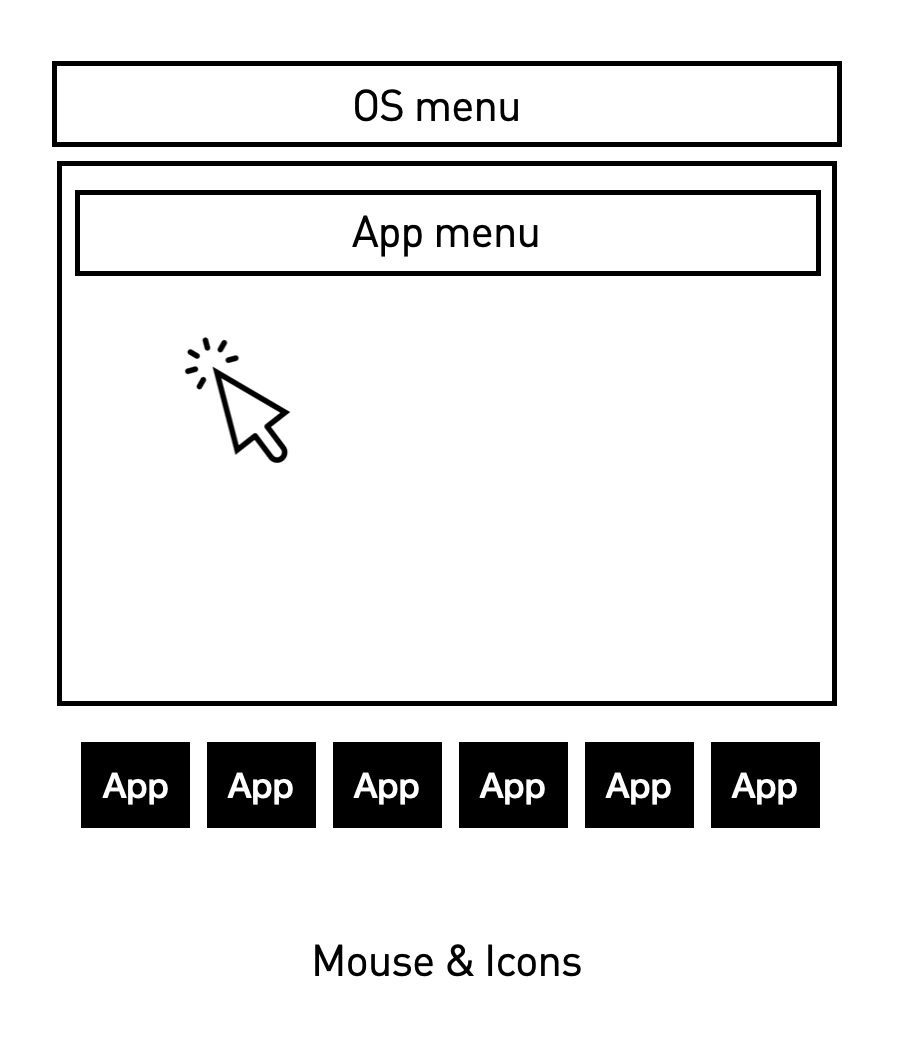

Apple introduced one of the first commercial versions of Xerox PARC’s mouse-and-icons, event-driven graphical user interface (GUI) in 1983, and it became the paradigm for operating systems as Microsoft and the Unix vendors layered GUIs on top of their command-line operating systems. The following schematic shows a GUI.

With a GUI, you didn’t need to memorise which applications or commands were available, as they were displayed in a menu. A button’s text or icon described the capability.

This solved an important UX problem known as discoverability, where messing around led to finding out. In terms of being able to use a computer, it democratised operation, leaving only computer programming to the domain of experts (who persisted with command line interfaces).

When the web was developed on top of the internet, it created a graphical application called the browser, which allowed documents on other computers on the internet to be used with the same ease with which a GUI-based operating system allowed access to local ones. The key UI components were the URL bar and hypertext links, which allowed any document stored anywhere in the world to be retrieved directly or from a pointer within another document.

The early web had neither images nor forms, but when forms were introduced, desktop applications could be simulated using them, and this led to all sorts of software being delivered from anywhere in the ‘cloud’ via a browser. This included browser-based access to other internet protocols such as email.

These software applications were delivered as services within a browser and created a UX paradigm like the one below. The URL bar eventually merged with search, allowing for app discovery and signup, while application menus were rendered within the browser window using the GUI paradigm.

Although the very first web browser allowed for publishing, commercial browsers were only for filling in forms and displaying web pages, not publishing them. A separate internet protocol, FTP, was used for loading files onto servers.

An online diary program called Blogger was the first to fully merge publishing into the browser, turning the web into a two-way, read-write medium. This two-way, read-write web became known as Web 2.0.

An important aspect of blogging was that it consisted of a series of individual posts that could be displayed in reverse chronological order on the same web page, and this became known as a feed. At the same time, Moreover (which I co-founded) was known as the web-feed company, as it turned updates from thousands of news sources into a single feed, like a weblog. I co-created the standard, RSS, to formalise the structure of these feeds.

With the Blogger team (who later created Twitter) we created a project called Newsblogger, which combined searching news links and posting them into a single feed, and this became the UX paradigm for social media. Blogging and RSS combined to create the news feed, which, when combined with many-to-many user subscriptions, created the social media UX paradigm shown in the schematic below.

The next UX paradigm evolved when smartphones replaced mouse movement with direct touch and swipe, and Apple introduced a new language for online applications, which were not browser-based and had to be installed via an app store. Although smartphones took online use from the office and home to somewhere truly personal and ubiquitous, they were a retrograde step in many ways: gatekeeping and restructuring development and, in Apple’s case, extracting rents through the app store.

Even some of the most iconic mobile app interface developments, such as swiping left and right in dating apps, were actually just copies of existing desktop UX. Hot or Not had developed online dating with the same UX intent (where the choice was combined with the submit action) before mobile.

AI chat is the next major UX paradigm shift. I believe it is still evolving and that it creates an opportunity for a new OS, which I am collaborating on.

The current state of AI chat is in many ways another retrograde step, this time all the way back to the command line, where a text prompt (we even use the same terminology for the text entry as command line interfaces) returns information that is mostly just text.

This is surely temporary. We are starting to see prompt results become much richer, with image, chart and application generation inline, but I believe the major impediments are matters of design rather than technology.

With prompts themselves, prompt engineering veered down a path towards how to ask a question rather than how to discover interesting questions to ask. Not only is prompt engineering an engineer’s rather than a creative’s way of looking at the problem of AI chat interaction, it is also tackling a problem that AI is already solving itself.

The best prompts now are quite simple, leaving AI to handle how to answer a question. Meanwhile, AI chat suffers from the same problem as everything from command lines to Alexa: how can I remember what to ask? Only this time the problem is exacerbated by the fact that AI is capable of practically anything. That makes the task less one of remembering commands and more a creative one: coming up with great questions, or delivering an interface to discover them, and then wrapping the resulting prompts as buttons.

AI buttons are different from, say, Photoshop menu commands in that they can just be a description of the desired outcome rather than a sequence of steps (which, incidentally, is why I think a lot of agents’ complexity disappears). For example, Photoshop used to require a complex sequence of tasks (drawing around elements with a lasso etc.) to remove clouds from an image. With AI you can just say ‘remove clouds’ and then create a ‘remove clouds’ button. An AI interface is a ‘semantic interface’.

If you then created a marketplace for semantic tools for image editing you would be overwhelmed with options, so there needs to be a way to narrow them. One way is to scope prompt options to the active element in a conversation: if it’s an image, show only image editing buttons (prompts). Another narrowing would be to show ‘remove clouds’ only if there are clouds in the image. That still wouldn’t be enough, so there needs to be a core set of actions per object, with the ability to add favorite buttons and to have more appear as you type in the chat bar. In other words, the chat bar shows menu options dynamically as you type.
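The narrowing rules above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `SemanticButton`, `visible_buttons` and the `CATALOG` entries are hypothetical names invented here, and the set of detected features (such as "clouds") is assumed to come from some separate image-analysis step that is not shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SemanticButton:
    """Hypothetical button: the label doubles as the prompt sent to the model."""
    label: str
    applies_to: str                    # kind of object, e.g. "image" or "text"
    requires: frozenset = frozenset()  # features that must be present in the object
    core: bool = False                 # part of the small default set for this kind


def visible_buttons(buttons, active_kind, detected, favorites=(), typed=""):
    """Return the labels to show for the active element of the conversation."""
    query = typed.strip().lower()
    shown = []
    for button in buttons:
        if button.applies_to != active_kind:
            continue                   # scope to the active element
        if not button.requires <= detected:
            continue                   # e.g. hide "remove clouds" when no clouds
        if query:
            if query in button.label.lower():
                shown.append(button.label)   # more buttons surface as you type
        elif button.core or button.label in favorites:
            shown.append(button.label)       # core set plus favorites by default
    return shown


# Illustrative catalog; the entries are made up for this sketch.
CATALOG = [
    SemanticButton("remove clouds", "image", frozenset({"clouds"})),
    SemanticButton("remove background", "image", core=True),
    SemanticButton("crop to subject", "image", core=True),
    SemanticButton("summarise", "text", core=True),
]
```

With an image containing clouds as the active element and nothing typed, only the two core image buttons are shown; typing "rem" in the chat bar additionally surfaces "remove clouds".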

Some prompts are persistent ones that apply to other prompts, such as what language to reply in and what writing style or format to use. These define the character of the responses and can broadly be described as AI personas. The interfaces for defining these characteristics (such as GPTs) feel embryonic, or as if they are following a dead-end UX path.
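One way to picture a persistent prompt is as a small set of stored settings that is prepended to every other prompt. The following is a minimal sketch of that idea only; `Persona` and `apply_persona` are hypothetical names, and real products may combine these settings with prompts quite differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    """Hypothetical persona: persistent settings applied to every prompt."""
    language: str = "English"
    style: str = "plain"
    output_format: str = "paragraphs"

    def preamble(self):
        # Turn the stored settings into instructions for the model.
        return (f"Reply in {self.language}. "
                f"Use a {self.style} writing style. "
                f"Format the answer as {self.output_format}.")


def apply_persona(persona, prompt):
    """Combine the persistent persona instructions with a one-off prompt."""
    return f"{persona.preamble()}\n\n{prompt}"
```

The user sets the persona once, and each later prompt (typed or triggered from a button) is wrapped by `apply_persona` before it is sent.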

With agents, which are essentially complex branching prompt tasks, much of the focus has been on creating these branching flows. Again, focusing on the creation of these structured, if-this-then-that flows is engineering thinking, and it can be largely automated by AI itself, as witnessed by the rise in popularity of the n8n tool, which is in part driven by importing agent flows generated by AI.

I see agent interfaces moving from the current ones, which look like n8n flow charts, to something completely different: stored prompts such as ‘book me a flight’. Because there is a branching flow with variables, these agent prompts are special: you might be asked some further questions before the task can be fulfilled. The interface for this type of structured conversation, even where the conversation is a one-off to build and configure the agent, is what is important.
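A stored agent prompt with follow-up questions could be modelled roughly as below. This is a deliberately simplified sketch: `AgentPrompt` and its methods are hypothetical, the questions are asked in a fixed order rather than through a true branching flow, and actually booking anything is out of scope.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPrompt:
    """Hypothetical stored prompt whose variables are filled in by conversation."""
    goal: str         # the stored prompt, e.g. "book me a flight"
    questions: dict   # variable name -> follow-up question to ask the user
    answers: dict = field(default_factory=dict)

    def next_question(self):
        """Return (variable, question) for the first unanswered variable, or None."""
        for name, question in self.questions.items():
            if name not in self.answers:
                return name, question
        return None

    def answer(self, name, value):
        """Record the user's reply to one follow-up question."""
        if name not in self.questions:
            raise KeyError(name)
        self.answers[name] = value

    def resolved_prompt(self):
        """Build the final prompt once every variable has an answer."""
        if self.next_question() is not None:
            raise ValueError("some variables are still unanswered")
        details = ", ".join(f"{name}: {self.answers[name]}" for name in self.questions)
        return f"{self.goal} ({details})"
```

A chat interface would call `next_question()` in a loop, show each question to the user, store the reply with `answer()`, and send `resolved_prompt()` to the model once nothing is left to ask.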

All of these point to prompt discovery and UI as being a core component of an AI OS. For now, I can’t say much more except to point to a specific document type that I believe provides a clue for the way forward.

For decades, computers ran applications that often created documents. But then many academics wanted to run code within their documents rather than use static charts or equations. These ‘notebooks’ were popularised by Jupyter a decade ago, but they can be traced back much earlier, to Mathematica’s development of code within documents. A schematic of the ‘notebook’ UX paradigm is shown below.

I believe that the inversion from a docs-in-apps to an apps-in-docs model is another key component of what forms an AI OS from a UX perspective, and I am working on bringing it to reality.