The Socio-economic Model of the AI Era, Part 2: AI

In part 1 of this multi-part series, which outlines each component of the overall F2N thesis on where we are with technology and what that implies, I covered the internet.

Its economic model relied on distribution-side network effects in sales and marketing. That required equity funding to cover the gap between growth and monetization. Without funding, monetizing early would slow growth before the market was captured. This created a winner-takes-all arms race among companies pursuing internet-leveraged growth.

I also discussed how the internet is causing societal disruption similar to that of the printing press, if not more, since the structural changes to communication are greater, and how that disruption might be resolved in future with small-world network topologies.

Now we can look at AI.

This is the big one, the information technology innovation that shows we are not merely in some Carlota Perez-style later phase of industrialization but in a transition from the industrial to the information age that is as big as the one from agrarian to industrial.

We are living through a civilizational paradigm shift. Like all transitions it will bring good things and bad, but we cannot uninvent things. The net gain will represent progress, and capturing it will depend on embracing the shift with alacrity.

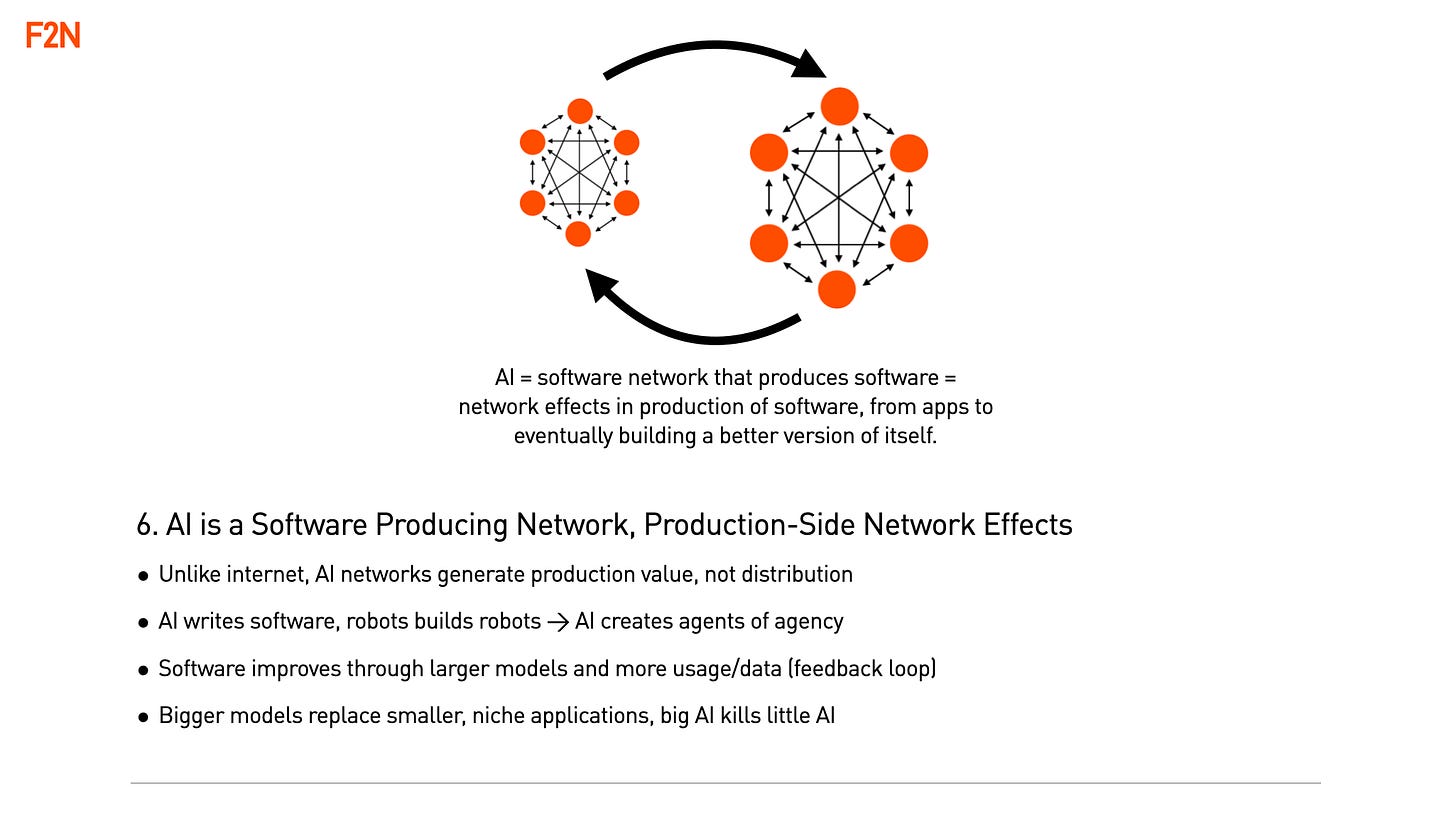

With AI, the software itself is a network. There are no algorithms or code in the traditional sense of codified, rule-based ‘if-this-then-that’ logic. Noise is also added, which paradoxically gives a greater chance of accuracy in outputs. With noise added, outputs vary for the same input, so AI systems are statistical whereas Boolean logic-based ones are deterministic. This is a completely different way of processing information.
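The contrast between deterministic and statistical processing can be illustrated with a toy sketch. It is not how any particular model works: the `scores` values and the `rule_based` threshold are invented for illustration, and the sampling step is a simplified stand-in for how generative models commonly pick an output from a probability distribution.

```python
import math
import random


def rule_based(temperature_c: float) -> str:
    """Deterministic if-this-then-that logic: same input, same output."""
    if temperature_c > 30:
        return "hot"
    return "mild"


def sample_token(scores: dict, temperature: float, rng: random.Random) -> str:
    """Draw one word from a softmax over scores (higher temperature = more noise)."""
    tokens = list(scores)
    top = max(scores.values())  # subtract the max for numerical stability
    weights = [math.exp((scores[t] - top) / temperature) for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


# Hypothetical scores a model might assign to candidate next words.
scores = {"hot": 2.0, "warm": 1.5, "mild": 0.5}

rng = random.Random(0)  # seeded only so the sketch is repeatable
deterministic_runs = {rule_based(31.0) for _ in range(200)}
sampled_runs = {sample_token(scores, 1.0, rng) for _ in range(200)}

print("rule-based outputs:", deterministic_runs)
print("sampled outputs:", sorted(sampled_runs))
```

The rule-based function returns one answer for a given input however often it is called, while the sampler returns a spread of answers weighted by their scores.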

AI models based on modern, ‘attention’ based (broad, simultaneous knowledge) transformer machine learning are the skeleton framework of settings left behind when you remove the data that created them. This scaffold framework then becomes a sieve that understands the world and can generate a response as it sifts a question.

This understanding seems to be based on a hidden dimensionality (i.e. orthogonal sieves). It may be that approaching a dimensionality that matches reality with 0% error would require massive or even infinite resources, in which case we are stuck in a world of statistical outputs and partial hallucination when it comes to AI.

In such a scenario the architecture and interplay between deterministic if-this-then-that logic and AI systems will become critical.

Most modern technologies seem like magic to people who didn’t create them. AI seems like magic to those who did. To paraphrase the observation about the mystery of quantum physics, ‘if you think you understand AI, then you don’t understand AI’.

Modern generative AI networks can output anything from a text summary to a video to an application itself. They can produce things that used to take a lot of time and effort, and therefore lower the cost of production. In the extreme case where they create a better version of themselves, they set off a runaway process of self-improvement that lowers production costs to near zero.

In other words, in AI the network effects are at the opposite end from internet ones - production rather than distribution. Given this underlying structural difference, AI transforms the business ecosystem that AI systems operate in, from startups to incumbents and investors.

This also means that the real AI revolution will be driven by software generation, not video, text or image content generation, since software that writes software will create a flywheel whereas software that creates words creates a spam fest.

When AI-enabled agency is taken to the physical world through AI-enabled automated manufacturing that in turn creates manufacturing automation (robots that build robots), this too will be a flywheel, one that triggers a manufacturing runaway event.

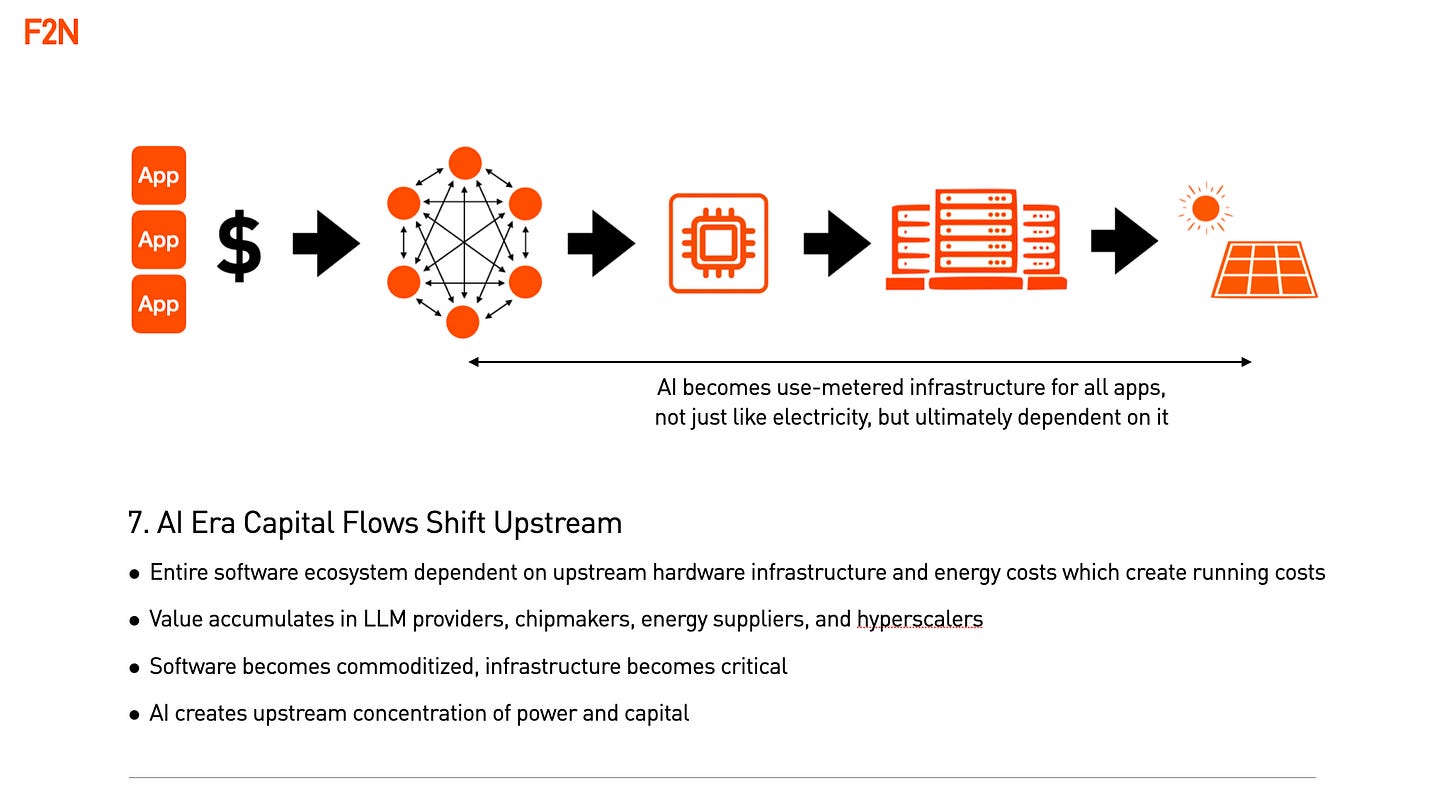

The biggest shift in AI development is that the cost of producing applications drops to zero but the cost of running them doesn’t, as apps often rely on a connection to large AI models whose providers charge on a per-token basis. This shifts flows of capital upstream to large balance sheets and infrastructure providers: large frontier models developed by large tech companies, chips, data centers and eventually energy. AI costs are ultimately measured in token charges, which in turn become a function of energy costs as fixed capital expenditure standardizes.
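A rough sketch of that cost structure, under loudly stated assumptions: the token count per request and the price per million tokens below are hypothetical round numbers, not any provider's actual pricing, and the build cost is set to zero only to mirror the limiting case described above.

```python
def monthly_inference_cost(
    requests_per_month: int,
    tokens_per_request: int,
    price_per_million_tokens: float,
) -> float:
    """Running cost paid upstream to the model provider, in dollars."""
    total_tokens = requests_per_month * tokens_per_request
    return total_tokens / 1_000_000 * price_per_million_tokens


BUILD_COST = 0.0             # limiting case: the app itself costs nothing to produce
TOKENS_PER_REQUEST = 2_000   # hypothetical
PRICE_PER_MILLION = 5.0      # hypothetical, in dollars

for requests in (10_000, 100_000, 1_000_000):
    cost = monthly_inference_cost(requests, TOKENS_PER_REQUEST, PRICE_PER_MILLION)
    print(f"{requests:>9,} requests/month: build ${BUILD_COST:,.0f}, run ${cost:,.2f}")
```

Under these assumptions the running cost scales linearly with usage while the build cost stays flat, which is the sense in which spending moves upstream to whoever sells the tokens.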

There is currently an arms race between China and the US in terms of infrastructure build-out, on the assumption that whoever gets to the AGI flywheel of automated self-improvement first wins.

Because AI is a general-purpose technology with huge efficiencies not just in the marginal cost of production (shrink-wrapped software on CD) or distribution (internet SaaS) but in the actual cost of developing an application (AI coding), it will require huge customer bases for the productivity benefits to show. In this scenario the West may have to partner with India to create a sufficiently large rival bloc to China and Southeast Asia, and we are already seeing OpenAI treat the Indian market as a growth priority.

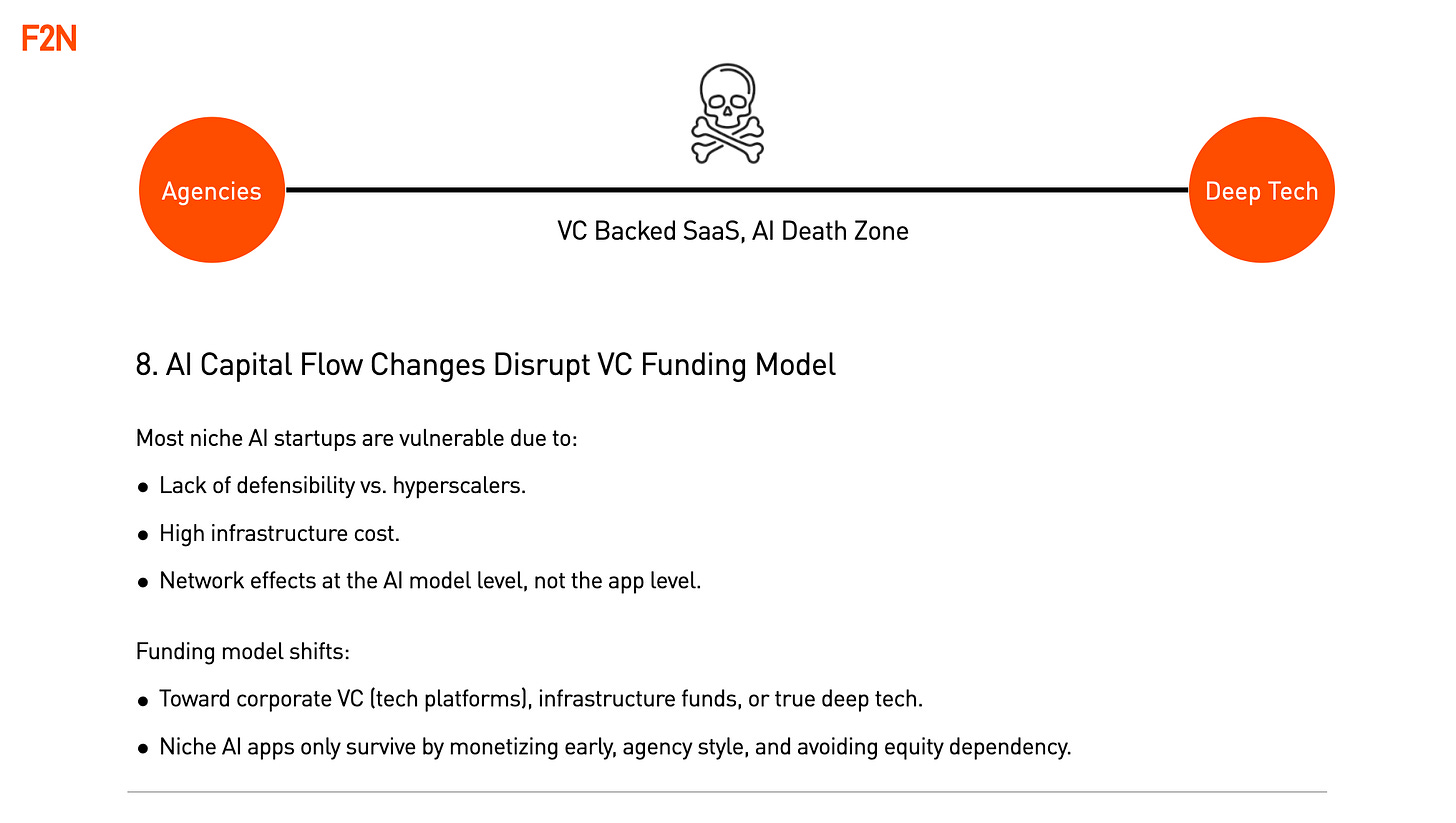

The structural differences in AI that create production side network effects and upstream value creation will create a barbell opportunity that may disrupt Venture Capital.

On one side of the barbell sit small, agile teams that leverage a customer base for multiple products, monetize early, require fewer rounds of financing and operate in markets that are not winner-takes-all. These will look somewhat like creative agencies, but producing applications rather than campaigns, and if they do take venture financing it may only be one or two rounds.

On the other side of the barbell will be deep tech (real science backed by protected IP) infrastructure opportunities. These range from large language model innovations to novel chips, energy innovation, or in-house development by large organizations based on models built from their own data at volume. All will involve relationships with large balance sheet incumbents or governments and require large-ticket rounds out of the reach of most VCs.

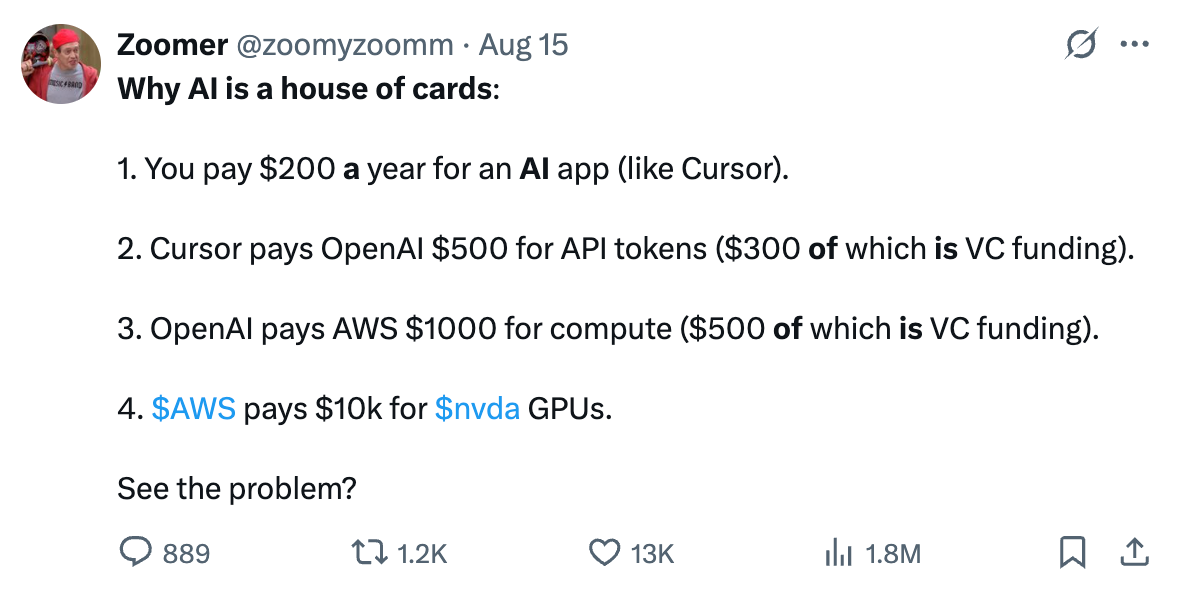

The death zone will be the bit in the middle - VC-backed AI SaaS applications, which are a house of cards in two respects:

1. new features baked into an LLM can wipe them out in an instant.

2. applying the internet model of funding growth in advance of monetization to an industry where all software costs money to run produces the situation best captured in a satirical post on Twitter.
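The second point can be made concrete with a back-of-the-envelope runway sketch. Every figure here is hypothetical (starting cash, user count, growth rates and per-user inference cost), and `months_of_runway` is an illustrative helper rather than a standard financial formula.

```python
def months_of_runway(
    cash: float,
    users: float,
    monthly_growth: float,
    cost_per_user: float,
    revenue_per_user: float = 0.0,
    max_months: int = 120,
) -> int:
    """Count full months a company can pay its inference bill before cash runs out."""
    months = 0
    while months < max_months:
        net_burn = users * (cost_per_user - revenue_per_user)
        if net_burn <= 0:
            return max_months  # revenue covers running costs under these assumptions
        if cash < net_burn:
            return months
        cash -= net_burn
        users *= 1 + monthly_growth  # growth compounds, and so does the bill
        months += 1
    return months


# Hypothetical startup: $1M in the bank, 10,000 free users, $2 of tokens per user per month.
slow = months_of_runway(1_000_000, 10_000, monthly_growth=0.0, cost_per_user=2.0)
fast = months_of_runway(1_000_000, 10_000, monthly_growth=0.2, cost_per_user=2.0)

print(f"runway with no growth: {slow} months")
print(f"runway at 20% monthly growth: {fast} months")
```

With running costs attached to every user, faster pre-monetization growth shortens the runway instead of merely deferring revenue, which is where the internet playbook breaks down.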

Unfortunately the death zone is where many VCs are investing in AI, because they are mapping the internet investment playbook onto companies that say ‘AI for X’ or ‘AI for Y’. X, Y and everything else are ultimately owned, or partially owned through token spend, by the LLMs, as an LLM is an ‘everything app’.

Cash could dry up either because this pyramid scheme of venture-backed AI inference token spend unwinds or because new innovations create step changes in efficiency. Either could trigger a major correction that spreads into AI infrastructure, just as the disappearance of VC-backed runway, aka ‘burn rate’, caused the dotcom one.

However, no matter how harsh, that correction would not be permanent, as the AI revolution itself is not going away. It could end up looking much like the gap between the dotcom bubble bursting, due to overinvestment while internet users were still relatively few, and the rebirth that came when mobile internet delivered those users.